The digital landscape of 2026 is characterized by a fundamental shift in how information is discovered, processed, and consumed, marking a departure from the traditional human-centric browsing habits that defined the previous decade. As artificial intelligence agents and large language models increasingly serve as the primary intermediaries between businesses and their prospective clients, the standard metrics of success for a website redesign have evolved significantly. While aesthetic appeal and user experience remain relevant for the final human point of contact, the structural integrity and machine-readability of a digital asset have become the paramount factors in determining whether a brand even appears in the conversational queries of modern search ecosystems. Consequently, organizations must evaluate their digital presence not merely as a visual portfolio, but as a structured database that is capable of being ingested and understood by sophisticated algorithms that prioritize semantic clarity over stylistic choices.

The Obsolescence of Aesthetic-First Design Methodologies

In the contemporary business environment, the traditional preoccupation with "pretty" websites has proven to be an insufficient strategy for maintaining market relevance, primarily because visual design alone does not communicate value to the automated systems that now govern discovery. While a visually stunning interface may provide psychological validation to stakeholders, it frequently obscures the underlying data if not accompanied by a rigorous technical framework. Furthermore, the proliferation of AI-driven browsers and personal assistants means that many users may never actually interact with the visual layer of a site until they are already deep within the conversion funnel. This shift necessitates a transition toward a more holistic approach to web design, where the visual presentation is viewed as a secondary layer built upon a foundation of machine-accessible information.

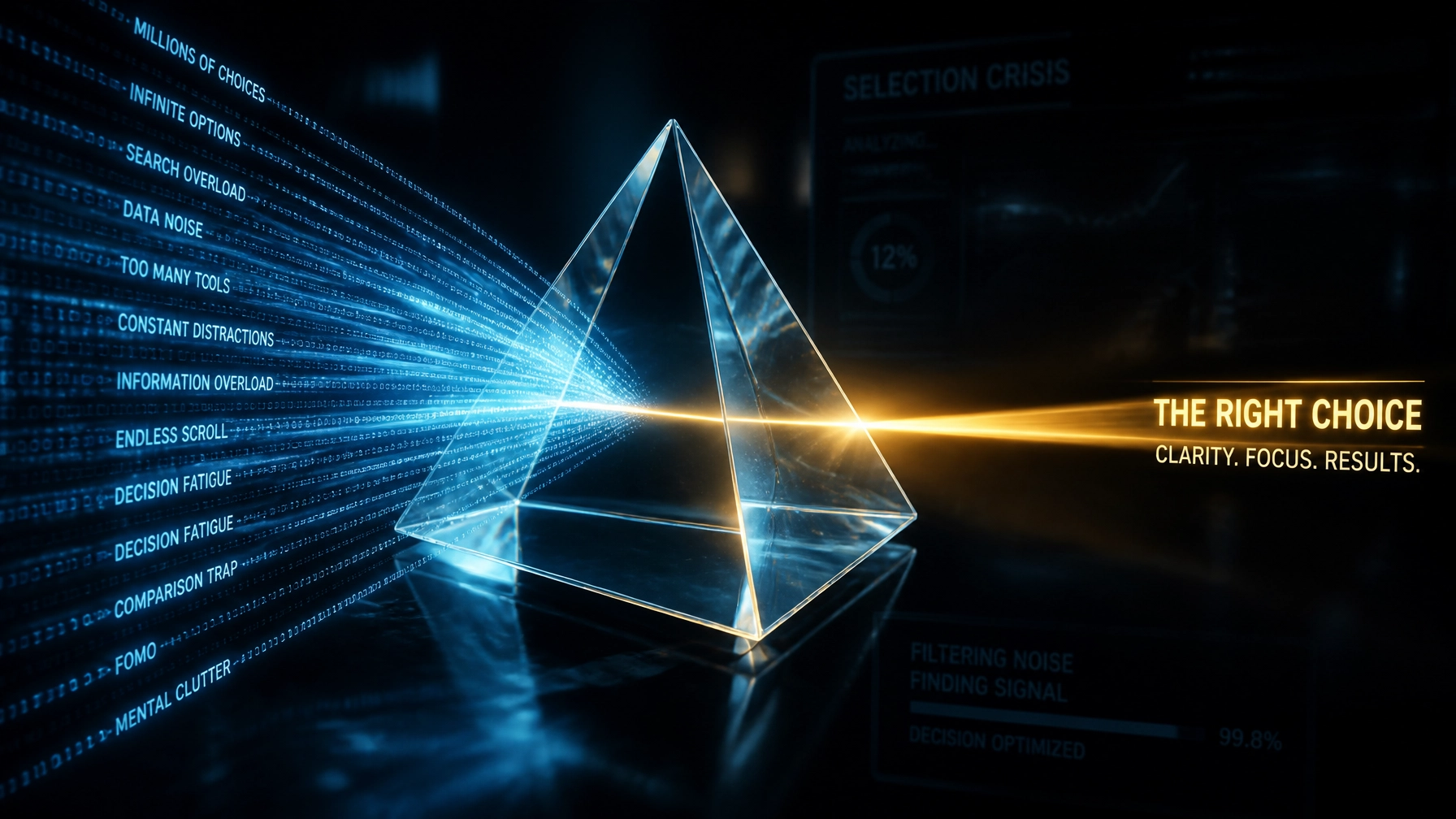

The challenge facing many contemporary organizations is the legacy of prioritizing flash over function, a methodology that has resulted in millions of websites appearing modern to the human eye while remaining essentially invisible to the neural networks that power search generative experiences. As these AI systems attempt to parse a website to answer a user's specific intent, they are often hindered by bloated code, non-semantic containers, and a lack of structured metadata, which ultimately leads to the business being overlooked in favor of competitors whose digital architecture is optimized for machine comprehension. Therefore, a website redesign in 2026 is only a viable investment if it actively addresses these invisible barriers to entry, ensuring that the brand's core offerings are presented in a language that both humans and machines can interpret with equal precision.
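To make these invisible barriers concrete, the following is a minimal sketch of a page audit, using only Python's standard-library HTML parser. The tag groupings, the `ReadabilityAudit` class, and the `audit` function are illustrative assumptions rather than an established standard or any particular tool's method; the sketch simply counts generic containers, semantic containers, and embedded JSON-LD blocks.

```python
from html.parser import HTMLParser

# Assumption: these groupings are illustrative, not an official classification.
GENERIC_TAGS = {"div", "span"}
SEMANTIC_TAGS = {"main", "nav", "article", "section", "header", "footer", "aside"}


class ReadabilityAudit(HTMLParser):
    """Counts generic vs. semantic containers and detects JSON-LD blocks."""

    def __init__(self):
        super().__init__()
        self.generic = 0
        self.semantic = 0
        self.json_ld_blocks = 0

    def handle_starttag(self, tag, attrs):
        # HTMLParser passes tag names in lowercase.
        if tag in GENERIC_TAGS:
            self.generic += 1
        elif tag in SEMANTIC_TAGS:
            self.semantic += 1
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self.json_ld_blocks += 1


def audit(html: str) -> dict:
    """Return simple counts describing how machine-readable the markup is."""
    parser = ReadabilityAudit()
    parser.feed(html)
    parser.close()
    return {
        "generic_containers": parser.generic,
        "semantic_containers": parser.semantic,
        "json_ld_blocks": parser.json_ld_blocks,
    }


if __name__ == "__main__":
    # Hypothetical markup typical of a "flash over function" page.
    sample = '<div class="wrap"><div class="hero"><span>Plumbing services</span></div></div>'
    print(audit(sample))
```

A page that reports many generic containers, no semantic ones, and no JSON-LD blocks is a reasonable candidate for the structural work described here, although raw counts like these are only a rough signal.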

The Architecture of AI-Readability: Semantic Integrity and Structured Data

The concept of AI-readability encompasses several technical disciplines, ranging from the implementation of advanced Schema.org vocabularies to strict adherence to semantic HTML5 standards that allow an algorithm to identify the specific nature of each content block. By using semantic sectioning elements in place of generic containers, developers provide a roadmap that enables AI agents to distinguish between primary service descriptions and secondary navigational links, thereby ensuring that the most relevant information is prioritized during the indexing phase. Moreover, the integration of structured data has moved beyond simple rich snippets to become the primary mechanism through which organizations declare their relationships, products, and geographical relevance to the global knowledge graph.
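As a rough illustration of how semantic markup lets a program separate navigation from primary content, the sketch below groups a page's text by its nearest enclosing landmark element. The choice of landmark tags, the `LandmarkOutline` class, the `outline` helper, and the sample markup are all assumptions made for this example; real crawlers are considerably more sophisticated.

```python
from html.parser import HTMLParser


class LandmarkOutline(HTMLParser):
    """Groups visible text by the nearest enclosing semantic landmark."""

    # Assumption: an illustrative subset of HTML5 sectioning elements.
    LANDMARKS = {"main", "nav", "article", "header", "footer", "aside"}

    def __init__(self):
        super().__init__()
        self.stack = []              # currently open landmark elements
        self.text_by_landmark = {}   # landmark name -> list of text fragments

    def handle_starttag(self, tag, attrs):
        if tag in self.LANDMARKS:
            self.stack.append(tag)

    def handle_endtag(self, tag):
        # Pop only when the closing tag matches the innermost open landmark.
        if self.stack and self.stack[-1] == tag:
            self.stack.pop()

    def handle_data(self, data):
        text = data.strip()
        if text:
            region = self.stack[-1] if self.stack else "unlabeled"
            self.text_by_landmark.setdefault(region, []).append(text)


def outline(html: str) -> dict:
    parser = LandmarkOutline()
    parser.feed(html)
    parser.close()
    return parser.text_by_landmark


if __name__ == "__main__":
    # Hypothetical page fragment.
    page = (
        '<nav><a href="/">Home</a><a href="/contact">Contact</a></nav>'
        "<main><article><h1>Emergency plumbing</h1><p>24-hour call-outs.</p></article></main>"
        "<div>Copyright notice</div>"
    )
    for region, fragments in outline(page).items():
        print(region, fragments)
```

Text inside a generic `div` ends up in the "unlabeled" bucket, which is the practical cost of non-semantic containers: the program has no structural hint about what that content is for.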

Structured data, when implemented correctly, serves as a bridge between the unstructured text favored by human readers and the organized datasets required by artificial intelligence. By providing explicit context, such as defining a price point as a numerical value within a specific currency or identifying a person as a key executive with specific credentials, businesses enable AI to synthesize their information into direct answers for users. This level of technical sophistication is no longer optional; it is the fundamental requirement for survival in a digital marketing landscape where the goal is to be the "source of truth" for automated queries. As such, any redesign initiative must prioritize clean, efficient code that minimizes the processing burden on the crawlers that are tasked with interpreting the site's purpose and value.
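The two kinds of explicit context mentioned above can be sketched as JSON-LD built with Python's `json` module. The types and properties used (`Product`, `Offer`, `price`, `priceCurrency`, `Person`, `jobTitle`, `worksFor`) come from the Schema.org vocabulary; the helper functions, the business name, the person, and the price are hypothetical values chosen only for illustration.

```python
import json


def service_offer_jsonld(name: str, price: float, currency: str) -> dict:
    """Describe an offering whose price is a number in a stated currency."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": price,             # numeric value, not "from $149!"
            "priceCurrency": currency,  # ISO 4217 code such as "USD"
        },
    }


def executive_jsonld(name: str, job_title: str, organization: str) -> dict:
    """Identify a person and their role within an organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "worksFor": {"@type": "Organization", "name": organization},
    }


def to_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


if __name__ == "__main__":
    # All values below are hypothetical.
    offer = service_offer_jsonld("Drain inspection", 149.0, "USD")
    person = executive_jsonld("Jane Doe", "Chief Executive Officer", "Example Plumbing Co.")
    print(to_script_tag(offer))
    print(to_script_tag(person))
```

Keeping the price as a number and the currency as a separate code is the point of the exercise: a machine can compare or convert the value without having to parse marketing copy.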

The Transformation of Search: From Indexing to Understanding

The evolution of search engines into "answer engines" has fundamentally altered the incentives for web development, as the objective has moved from achieving a high rank on a list of blue links to becoming the primary citation for a generative response. This transition is driven by the realization that users no longer wish to hunt for information across multiple tabs; instead, they expect their AI assistants to aggregate, summarize, and present the most accurate solution to their inquiries in a conversational format. Within this context, the role of a website has shifted from being a destination to being a reliable data provider, whereby the site's authority is determined by the clarity and accessibility of its content hierarchy.

Furthermore, the emergence of Google's "Search Generative Experience" (SGE), since rebranded as AI Overviews, and of similar technologies means that the traditional silos of SEO are being broken down in favor of a more integrated approach that emphasizes topical authority and semantic relevance. Organizations that fail to adapt their digital assets to this new reality risk total exclusion from the information ecosystem, as AI agents will naturally gravitate toward sources that offer the least resistance to data extraction. Consequently, a redesign in 2026 must be viewed through the lens of data portability and technical agility, allowing the brand's message to permeate various platforms and devices without losing its essential meaning or context.

The Human-AI Hybrid Experience: Balancing Dual Demands

While the emphasis on machine-readability is critical, it must not come at the total expense of the human experience, as the final conversion still occurs within the mind of a person who values trust, aesthetics, and ease of use. The modern challenge for agencies like JDG.AGENCY is to navigate the delicate balance between these two audiences, creating websites that are both technically transparent for AI and emotionally resonant for humans. This requires a synergistic approach where the backend structure supports the frontend presentation, ensuring that the speed, accessibility, and intuitive flow of the site enhance the brand's credibility while the hidden metadata handles the heavy lifting of discovery.

This dual-track strategy involves a deep understanding of interaction design, whereby the user's journey is mapped with the same precision as the site's data schema. By optimizing for Interaction to Next Paint (INP) and the other Core Web Vitals, businesses can satisfy the technical requirements of modern search algorithms while simultaneously providing a friction-free environment for the human visitor. In short, the websites that will dominate the 2026 landscape are those that treat human and machine visitors as equally important stakeholders, leveraging high-performance web hosting and robust technical architecture to deliver excellence to both.
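For readers who want a concrete sense of what optimizing for INP means, the sketch below rates a set of field measurements against Google's published thresholds: 200 ms or less is "good", and anything above 500 ms is "poor", assessed at the 75th percentile of page visits. The sample values are invented, and the nearest-rank percentile used here is a simplifying assumption; production tooling may compute the percentile differently.

```python
import math

# Google's published INP thresholds, in milliseconds.
GOOD_MAX_MS = 200
NEEDS_IMPROVEMENT_MAX_MS = 500


def percentile_75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method (a simplifying assumption)."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def classify_inp(milliseconds: float) -> str:
    """Map an INP value to its rating bucket."""
    if milliseconds <= GOOD_MAX_MS:
        return "good"
    if milliseconds <= NEEDS_IMPROVEMENT_MAX_MS:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    # Hypothetical per-visit INP measurements in milliseconds.
    visits = [120, 180, 90, 640, 210, 150, 300, 170]
    p75 = percentile_75(visits)
    print(p75, classify_inp(p75))
```

Rating the 75th percentile rather than the average means a site is judged by the experience of its slower visits, so a handful of fast interactions cannot mask a sluggish page.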

The JDG.AGENCY Methodology: Harmonizing Visual Excellence with Technical Precision

Recognizing that many business owners are overwhelmed by the rapid pace of technological change, JDG.AGENCY has developed a proprietary redesign methodology that specifically targets the requirements of the 2026 digital economy. Our approach begins with a comprehensive audit of an organization's existing data architecture, identifying the structural weaknesses that are preventing AI agents from fully comprehending the brand's value proposition. By rebuilding the digital presence from the ground up with a focus on semantic HTML and bespoke Schema implementation, we ensure that our clients are not just visible, but are positioned as authoritative sources within their respective industries.

Moreover, our team understands that a successful redesign is not a one-time event but an ongoing evolution that requires constant monitoring and adjustment as AI models continue to advance. By offering comprehensive solutions, including custom CRM development and integrated booking systems, JDG.AGENCY empowers organizations to capture the traffic generated by their AI-ready websites and convert it into tangible business growth. As businesses seek a project quote for their next redesign, the primary question must not be how the site looks, but how effectively it communicates its existence to the intelligent systems that now define the modern marketplace.

Navigating the Future of the Programmable Web

As we look toward the future, it is evident that the internet will continue to become more fragmented and specialized, with artificial intelligence serving as the glue that connects disparate data points into a cohesive user experience. In this environment, the "programmable web" requires a high degree of technical standardization, and those organizations that embrace these standards will find themselves at a distinct competitive advantage. A website redesign is no longer a cosmetic update; it is a strategic repositioning of the company's intellectual property to ensure its survival in an age where algorithms are the new gatekeepers of commercial success.

Ultimately, the transformation of the web in 2026 reflects a broader trend toward efficiency and intelligence in all aspects of business operations. By investing in a redesign that prioritizes AI-readability, organizations are not only future-proofing their digital assets but are also contributing to a more organized and accessible global information network. As the boundaries between the physical and digital worlds continue to blur, the role of the website as the central hub of a brand's identity remains more relevant than ever, provided it is built on a foundation that the future can actually read.